Apache Parquet

Apache Parquet is an open-source, column-oriented data file format designed for efficient data storage and retrieval. It provides high-performance compression and encoding schemes for handling complex data in bulk, and it is supported by many programming languages and analytics tools.

Vendor

The Apache Software Foundation


Product details


Parquet is widely used across big data ecosystems and is compatible with many programming languages and analytics tools.

Features

- Columnar storage format for efficient data access

- High-performance compression and encoding schemes

- Language-agnostic design with support for Java, C++, Python, Go, and Rust

- Rich ecosystem of tools and libraries including parquet-java, parquet-cpp, and fastparquet

- Schema evolution support for flexible data modeling

- Integration with big data platforms like Apache Spark, Hive, Impala, and Drill

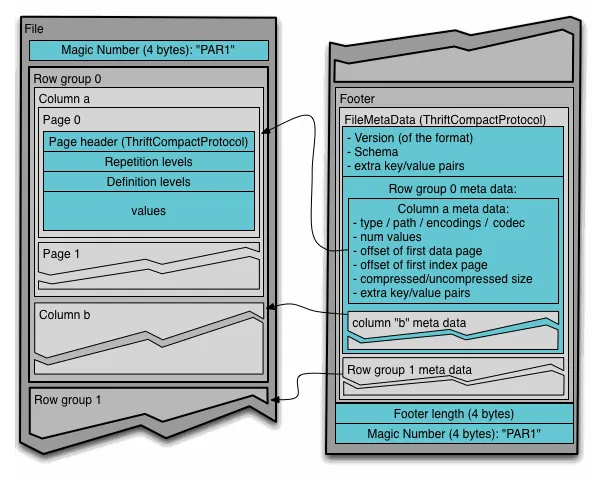

- Support for nested data structures and complex types

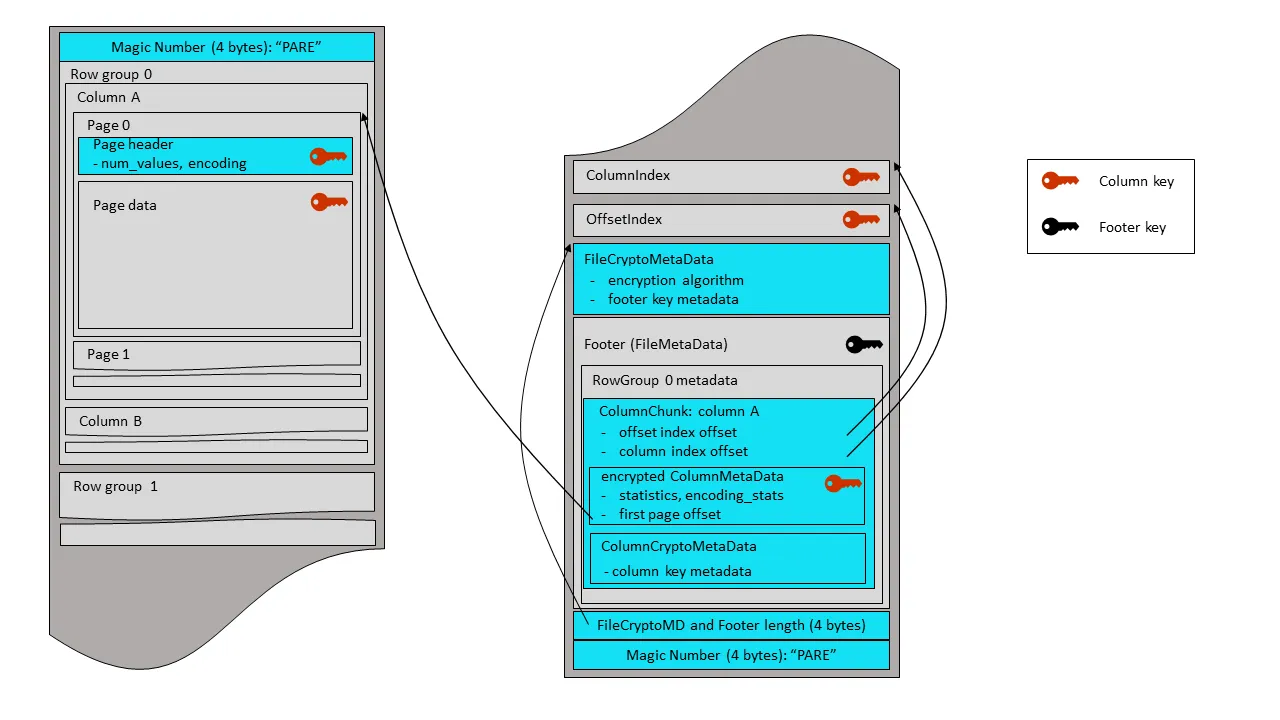

- File metadata serialized with Apache Thrift for cross-language interoperability

- Compatibility with cloud storage and distributed file systems
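To make the columnar storage and encoding features above more concrete, here is a minimal, in-memory sketch in plain Python. It is a simplified illustration, not Parquet's actual on-disk format or any Parquet library's API: the helper names (`to_columns`, `dictionary_encode`, `run_length_encode`) are hypothetical, and the run-length step is a plain (value, count) scheme rather than Parquet's RLE/bit-packing hybrid. It shows how row records are pivoted into columns, and why a low-cardinality column stored contiguously compresses well once it is dictionary-encoded and run-length encoded.

```python
from itertools import groupby


def to_columns(rows):
    """Pivot a list of row dicts into a dict of column lists.

    Assumes every row has the same keys (a fixed schema).
    """
    names = list(rows[0].keys()) if rows else []
    return {name: [row[name] for row in rows] for name in names}


def dictionary_encode(values):
    """Map each distinct value to a small integer id, in first-seen order."""
    dictionary = []  # id -> value
    index = {}       # value -> id
    ids = []
    for v in values:
        if v not in index:
            index[v] = len(dictionary)
            dictionary.append(v)
        ids.append(index[v])
    return dictionary, ids


def run_length_encode(ids):
    """Collapse consecutive repeats into (value, run_length) pairs.

    Simplified illustration only; not Parquet's RLE/bit-packing hybrid.
    """
    return [(value, len(list(group))) for value, group in groupby(ids)]


rows = [
    {"city": "Oslo", "temp": 3},
    {"city": "Oslo", "temp": 4},
    {"city": "Oslo", "temp": 2},
    {"city": "Rome", "temp": 15},
    {"city": "Rome", "temp": 16},
]

columns = to_columns(rows)
dictionary, ids = dictionary_encode(columns["city"])
runs = run_length_encode(ids)

print(columns["temp"])  # [3, 4, 2, 15, 16]
print(dictionary)       # ['Oslo', 'Rome']
print(runs)             # [(0, 3), (1, 2)]
```

Because all values of one column sit next to each other, repeated values form long runs; in a row-oriented layout the same values would be interleaved with other fields and compress less effectively.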

Capabilities

- Enables efficient scanning and filtering of large datasets

- Reduces I/O and storage costs through columnar compression

- Facilitates interoperability across diverse data processing engines

- Supports batch and stream processing workflows

- Allows schema definition and enforcement for structured data

- Provides tools for data import/export, conversion, and validation

- Enhances performance in analytics and machine learning pipelines

- Enables seamless integration with data lakes and warehouses
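The efficient scanning and filtering capability rests largely on two ideas: reading only the columns a query needs (projection), and skipping whole row groups whose stored min/max statistics rule out a match. The sketch below models both in memory with plain Python. It is an assumption-laden simplification, not how any Parquet reader is implemented, and `RowGroup`, `make_row_groups`, and `scan` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class RowGroup:
    columns: dict  # column name -> list of values
    stats: dict    # column name -> (min, max) over this group's values


def make_row_groups(rows, group_size):
    """Split rows into fixed-size groups, storing columns plus min/max stats."""
    groups = []
    for start in range(0, len(rows), group_size):
        chunk = rows[start:start + group_size]
        cols = {name: [r[name] for r in chunk] for name in chunk[0]}
        stats = {name: (min(vals), max(vals)) for name, vals in cols.items()}
        groups.append(RowGroup(cols, stats))
    return groups


def scan(groups, columns, filter_col, lo, hi):
    """Return only the requested columns for rows where lo <= filter_col <= hi.

    Groups whose [min, max] range for filter_col cannot overlap [lo, hi]
    are skipped using their stats alone, without examining their values.
    """
    result = {name: [] for name in columns}
    skipped = 0
    for g in groups:
        gmin, gmax = g.stats[filter_col]
        if gmax < lo or gmin > hi:
            skipped += 1
            continue
        for i, v in enumerate(g.columns[filter_col]):
            if lo <= v <= hi:
                for name in columns:
                    result[name].append(g.columns[name][i])
    return result, skipped


rows = [{"id": i, "amount": i * 10, "note": f"row{i}"} for i in range(10)]
groups = make_row_groups(rows, group_size=4)  # ids 0-3, 4-7, 8-9

result, skipped = scan(groups, ["id"], "amount", 45, 75)
print(result)   # {'id': [5, 6, 7]}
print(skipped)  # 2
```

Here two of the three row groups are eliminated from their statistics alone, and the `note` column is never touched because the query does not request it.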

Benefits

- Improves query performance and resource utilization

- Minimizes storage footprint for large datasets

- Simplifies data exchange between heterogeneous systems

- Enhances scalability and flexibility in data architecture

- Reduces development overhead through a standardized format

- Promotes consistency and reliability in data pipelines

- Supports modern data engineering and analytics use cases

- Benefits from open-source governance and community contributions
