Apache NiFi is a powerful and user-friendly system for automating the movement, transformation, and management of data across systems. It enables secure, scalable, and real-time dataflow for cybersecurity, observability, event streams, and AI pipelines.

Vendor

The Apache Software Foundation

Company Website

Apache NiFi

Apache NiFi is a robust, scalable, and user-friendly dataflow automation platform designed to manage the movement of data between systems. It enables real-time data ingestion, transformation, routing, and delivery across diverse environments, supporting modern use cases like cybersecurity, observability, IoT, and AI pipelines. Built on flow-based programming principles, NiFi provides a visual interface for designing and monitoring dataflows, making it accessible to both developers and data engineers.

Features

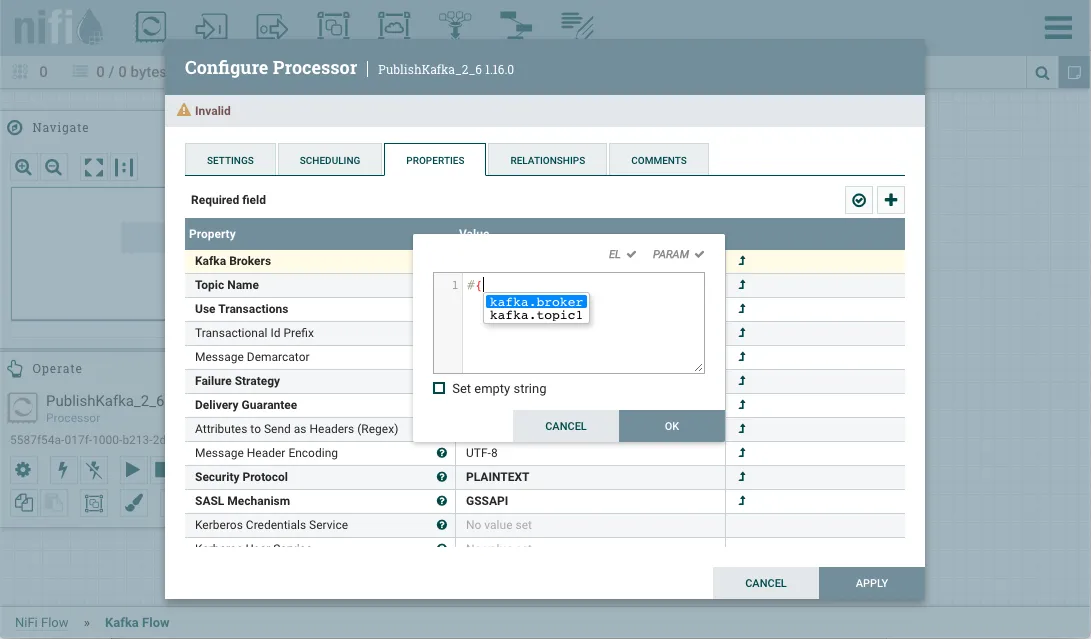

- Visual drag-and-drop interface for building dataflows

- FlowFile architecture for tracking data and metadata

- Real-time data ingestion and transformation

- Dynamic prioritization and back pressure control

- Runtime modification of flow configurations

- Secure communication via TLS (including HTTPS) and SSH

- Multi-tenant authorization and policy management

- Fine-grained data provenance tracking

- Loss-tolerant and guaranteed delivery options

- Configurable trade-off between low latency and high throughput

- Extensive processor library for diverse data operations

- Pluggable architecture for repositories and extensions
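The FlowFile, prioritization, and back pressure features above can be pictured with a minimal Python sketch of a single connection queue between two processors. This is an illustrative model under stated assumptions, not NiFi's implementation or API. The `FlowFile` and `Connection` classes and the rule that a lower numeric `priority` attribute dequeues first are hypothetical. Only the default thresholds of 10,000 objects and roughly 1 GB reflect NiFi's documented back pressure defaults.

```python
import heapq
import itertools
from dataclasses import dataclass


@dataclass
class FlowFile:
    """Simplified FlowFile: key/value attributes (metadata) plus a content payload."""
    attributes: dict
    content: bytes


class Connection:
    """Toy queue between two processors with NiFi-style back pressure thresholds.

    Defaults follow NiFi's default thresholds (10,000 objects, about 1 GB);
    the queueing logic itself is a hypothetical model, not NiFi's code.
    """

    def __init__(self, max_count=10_000, max_bytes=1 << 30):
        self.max_count = max_count
        self.max_bytes = max_bytes
        self._heap = []
        self._seq = itertools.count()  # tie-breaker: FIFO among equal priorities
        self._bytes = 0

    def is_back_pressured(self):
        # Once either threshold is reached, upstream work should pause.
        return len(self._heap) >= self.max_count or self._bytes >= self.max_bytes

    def offer(self, flowfile):
        """Enqueue a FlowFile; return False if the queue is under back pressure."""
        if self.is_back_pressured():
            return False
        # Hypothetical prioritizer: lower numeric "priority" attribute dequeues first;
        # FlowFiles without the attribute are treated as priority 0.
        priority = int(flowfile.attributes.get("priority", "0"))
        heapq.heappush(self._heap, (priority, next(self._seq), flowfile))
        self._bytes += len(flowfile.content)
        return True

    def poll(self):
        """Dequeue the highest-priority FlowFile, or return None if empty."""
        if not self._heap:
            return None
        _, _, flowfile = heapq.heappop(self._heap)
        self._bytes -= len(flowfile.content)
        return flowfile


if __name__ == "__main__":
    conn = Connection(max_count=2)
    conn.offer(FlowFile({"priority": "5"}, b"low"))
    conn.offer(FlowFile({"priority": "1"}, b"high"))
    print(conn.is_back_pressured())        # True: object-count threshold reached
    print(conn.offer(FlowFile({}, b"x")))  # False: rejected under back pressure
    print(conn.poll().content)             # b'high'
```

The sequence counter keeps ordering stable for FlowFiles of equal priority. In NiFi itself, thresholds and prioritizers are configured per connection rather than written in code.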

Capabilities

- Automates data movement across heterogeneous systems

- Supports complex routing, mediation, and transformation logic

- Operates in clustered environments with zero-leader architecture

- Enables real-time monitoring and feedback through a web-based UI

- Integrates with enterprise systems via standard protocols

- Handles structured, semi-structured, and unstructured data

- Provides full lineage tracking for compliance and auditing

- Scales horizontally for high-volume data processing

- Adapts to changing data formats and protocols

- Facilitates secure, accountable system-to-system interactions
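Lineage tracking can be pictured as walking provenance records back from a FlowFile to the FlowFiles it was derived from. The Python sketch below assumes a simplified, hypothetical `ProvenanceEvent` record that lists derived child UUIDs (as a fork does). The `ancestors` and `lineage` helpers are illustrative only. NiFi's real provenance events carry richer data and are queried through its UI or REST API, not through this code.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ProvenanceEvent:
    """Simplified provenance record (hypothetical shape, not NiFi's API)."""
    event_id: int
    flowfile_uuid: str
    event_type: str           # e.g. "RECEIVE", "FORK", "SEND"
    component: str            # processor that emitted the event
    child_uuids: tuple = ()   # FlowFiles derived from this one, e.g. by a FORK


def ancestors(events, uuid):
    """Return `uuid` plus every FlowFile UUID it was derived from."""
    parents = defaultdict(set)
    for event in events:
        for child in event.child_uuids:
            parents[child].add(event.flowfile_uuid)
    seen, stack = set(), [uuid]
    while stack:
        current = stack.pop()
        if current not in seen:  # guard against revisiting shared ancestors
            seen.add(current)
            stack.extend(parents[current])  # walk back toward the origin
    return seen


def lineage(events, uuid):
    """Return all events recorded for `uuid` and its ancestors, in event order."""
    related = ancestors(events, uuid)
    return sorted((e for e in events if e.flowfile_uuid in related),
                  key=lambda e: e.event_id)


if __name__ == "__main__":
    events = [
        ProvenanceEvent(1, "a", "RECEIVE", "ListenHTTP"),
        ProvenanceEvent(2, "a", "ATTRIBUTES_MODIFIED", "UpdateAttribute"),
        ProvenanceEvent(3, "a", "FORK", "SplitText", ("b", "c")),
        ProvenanceEvent(4, "b", "SEND", "PutSFTP"),
        ProvenanceEvent(5, "c", "SEND", "PutFile"),
    ]
    print([e.event_id for e in lineage(events, "b")])  # [1, 2, 3, 4]
```

Tracing FlowFile `b` recovers its origin in `a` without pulling in the sibling `c`. This is the kind of answer an audit question such as "where did this record come from?" needs.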

Benefits

- Simplifies data integration across platforms

- Enhances agility in adapting to evolving data needs

- Reduces development complexity with visual flow design

- Improves reliability with fault-tolerant architecture

- Supports data security and compliance requirements

- Enables rapid deployment and modification of dataflows

- Supports continuous improvement in production environments

- Promotes reuse of components and modular design

- Optimizes resource usage across CPU, memory, and disk

- Provides transparency and traceability of data operations